Engineering at Wagon

February 13, 2015 | Mike Craig

Over the last few months, we’ve been user-testing a preview version of Wagon—a modern SQL editor that powers a Google Docs-style experience for analysts, engineers, and their teams. We’ve iterated on both our design process and technology choices to build a tool that we and our users rely on every day.

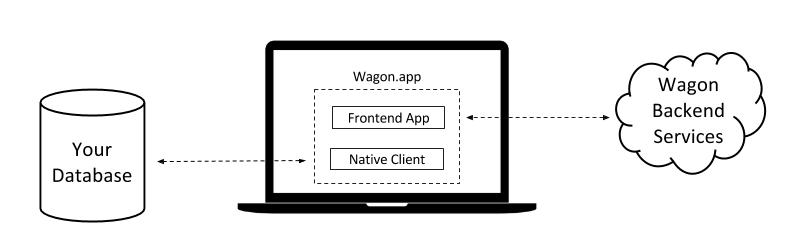

Wagon takes advantage of cloud-local computing, combining the strengths of remote servers (syncing, sharing, and storage) with the advantages of a local client (data locality and device-specific user interfaces). Our architecture has three components: a frontend app, a native client, and backend services.

We make it easy to get started. You can download Wagon—a hybrid web application and native client, like Slack—and connect it to an existing local, private, or public database. The frontend is a slick interface for writing and running SQL, interacting with large results, and generating statistics and pivot tables. The native client locally handles connections, manages query runs, and processes results as they return from the database. The results, statistics, and pivots are cached locally and can be used to create sharable charts. When shared, Wagon securely transfers data from the local machine to the backend, making it available to permissioned teammates.

Frontend App

We use HTML, CSS, and JavaScript to maintain a consistent user experience across platforms and environments. The frontend is a single-page application built with React and Flux. We bundle it with the native client as a desktop application, and we have an in-browser experience for sharing and collaboration.

Technology: JavaScript, React, Flux, Ace

- React gives us declarative, reusable UI elements. React components compose like HTML elements with rich state handling, so we manage fewer event listeners and nested callbacks.

- Flux complements React and helps structure our frontend code. It separates logic, state, and UI into loosely coupled components, and has a straightforward data flow.

- Ace is an extensible, embeddable code editor. We use its syntax highlighting and have extended its autocomplete.
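
To make the Flux data flow described above concrete, here is a minimal sketch of the pattern. It is written in Python only for brevity and is not Wagon's frontend code, which is JavaScript. The `Dispatcher` and `QueryStore` classes, the `QUERY_STARTED` and `QUERY_FINISHED` action types, and the `q1` query id are all hypothetical names chosen for illustration.

```python
from typing import Any, Callable, Dict, List

Action = Dict[str, Any]


class Dispatcher:
    """Central hub: every action is forwarded to all registered stores."""

    def __init__(self) -> None:
        self._callbacks: List[Callable[[Action], None]] = []

    def register(self, callback: Callable[[Action], None]) -> None:
        self._callbacks.append(callback)

    def dispatch(self, action: Action) -> None:
        for callback in self._callbacks:
            callback(action)


class QueryStore:
    """Owns query state. Views read it; only dispatched actions change it."""

    def __init__(self, dispatcher: Dispatcher) -> None:
        self._status: Dict[str, str] = {}
        self._listeners: List[Callable[[], None]] = []
        dispatcher.register(self._on_action)

    def status(self, query_id: str) -> str:
        return self._status.get(query_id, "idle")

    def subscribe(self, listener: Callable[[], None]) -> None:
        self._listeners.append(listener)

    def _on_action(self, action: Action) -> None:
        if action["type"] == "QUERY_STARTED":
            self._status[action["query_id"]] = "running"
        elif action["type"] == "QUERY_FINISHED":
            self._status[action["query_id"]] = "done"
        else:
            return  # ignore actions this store does not handle
        # Tell subscribed views that state changed so they can re-render.
        for listener in self._listeners:
            listener()


dispatcher = Dispatcher()
store = QueryStore(dispatcher)

# A stand-in for a view: it records the status each time the store changes.
rendered: List[str] = []
store.subscribe(lambda: rendered.append(store.status("q1")))

dispatcher.dispatch({"type": "QUERY_STARTED", "query_id": "q1"})
dispatcher.dispatch({"type": "QUERY_FINISHED", "query_id": "q1"})
print(rendered)
```

Data moves in one direction here: actions go to the dispatcher, the dispatcher updates the store, and the store notifies the view. The view never mutates store state directly, which is what keeps logic, state, and UI loosely coupled.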

Native Client

Our users want to query their databases without any new IT or admin setup, so they connect via their local machine with their existing credentials. Wagon handles connections through the native client, since most databases require socket-level network access that isn’t possible directly from a browser.

Exploration and analysis can be a time-consuming and tedious job with existing SQL tools. Each step is an additional database query subject to queuing, slow execution, and changing data. Our users want to quickly explore results and evaluate summary statistics without executing subsequent queries. To power that experience, the client also computes statistics and caches query results on the local file system.
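
As a rough illustration of the idea in the paragraph above, the sketch below caches query results on the local file system and computes summary statistics from the cached rows instead of re-running the query. It is in Python for brevity and is not Wagon's implementation (the real client is written in Haskell). The function names, the JSON file layout, the `local-db` connection label, and the `fake_execute` stand-in for a database call are all assumptions, and cache invalidation is deliberately left out.

```python
import hashlib
import json
import statistics
import tempfile
from pathlib import Path
from typing import Callable, Dict, List

Row = Dict[str, float]


def cache_path(cache_dir: Path, connection: str, sql: str) -> Path:
    """Derive a stable file name from the connection label and query text."""
    key = hashlib.sha256(f"{connection}\n{sql}".encode("utf-8")).hexdigest()
    return cache_dir / f"{key}.json"


def run_cached(
    cache_dir: Path,
    connection: str,
    sql: str,
    execute: Callable[[str], List[Row]],
) -> List[Row]:
    """Return cached rows if present; otherwise run the query and cache it."""
    path = cache_path(cache_dir, connection, sql)
    if path.exists():
        return json.loads(path.read_text())
    rows = execute(sql)
    path.write_text(json.dumps(rows))
    return rows


def summarize(rows: List[Row], column: str) -> Dict[str, float]:
    """Compute summary statistics locally, with no further database queries."""
    values = [row[column] for row in rows]
    return {
        "count": len(values),
        "min": min(values),
        "max": max(values),
        "mean": statistics.mean(values),
    }


calls: List[str] = []


def fake_execute(sql: str) -> List[Row]:
    """Hypothetical stand-in for a real database round trip."""
    calls.append(sql)
    return [{"amount": 10.0}, {"amount": 20.0}, {"amount": 60.0}]


with tempfile.TemporaryDirectory() as tmp:
    cache_dir = Path(tmp)
    sql = "SELECT amount FROM orders"
    first = run_cached(cache_dir, "local-db", sql, fake_execute)
    second = run_cached(cache_dir, "local-db", sql, fake_execute)  # cache hit
    stats = summarize(second, "amount")

print(len(calls), stats)
```

The second call never reaches the database, so follow-up exploration avoids queuing and slow execution. A real client would also need a policy for when cached results are stale.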

We built the native client in Haskell. It’s a high-level language with great libraries, static compilation, a high performance ceiling, and an active community. We use MacGap to bundle the client and the frontend into a downloadable OS X application (Windows soon!).

Technology: Haskell, MacGap

- Prototyping and refactoring are safe with Haskell’s type-driven development. The compiler helps us avoid bugs and edge cases as we iterate.

- Haskell’s opt-in control over compiler optimizations and strictness allows for incremental optimization. GHC includes a capable profiler, and the community maintains libraries for testing and performance measurement.

- MacGap is a lightweight (~1MB) web-to-native wrapper. It provides a JS API to integrate with the file system, subprocesses, and OS-specific features like notifications and menus.

*Note: Wagon is now powered by Electron rather than MacGap. Read our Electron blog post to learn more.

Backend Services

Our backend services persist state and shared data to allow users to securely collaborate on queries, results, and charts. The backend is also written in Haskell: using the same language for the client and the server helps us stay flexible and reduce complexity. We store application state in Postgres, while larger immutable data, like shared query results, live in Amazon S3.

Technology: Haskell, Postgres, Amazon S3

- Haskell’s lightweight threads and the GHC runtime scale well on multi-core machines.

- Postgres is powerful, flexible, and straightforward to host. We rely on capabilities like TOAST and JSON support to try out new features.

There are exciting upcoming technology challenges, including optimizing compute across local and remote environments, designing efficient streaming statistics algorithms, and building features that learn and improve with usage.
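
For readers unfamiliar with streaming statistics, one well-known single-pass technique is Welford's algorithm, which updates the mean and variance one value at a time without holding the full result set in memory. The sketch below is in Python for brevity. It is only an example of the general idea, not a description of the algorithms Wagon uses or plans to use, and the `RunningStats` class and the sample values are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class RunningStats:
    """Welford's online algorithm for mean and sample variance."""

    count: int = 0
    mean: float = 0.0
    m2: float = 0.0  # running sum of squared deviations from the mean

    def update(self, value: float) -> None:
        self.count += 1
        delta = value - self.mean
        self.mean += delta / self.count
        # Uses the updated mean, which keeps the computation numerically stable.
        self.m2 += delta * (value - self.mean)

    @property
    def variance(self) -> float:
        """Sample variance; defined as 0.0 until at least two values arrive."""
        return self.m2 / (self.count - 1) if self.count > 1 else 0.0


stats = RunningStats()
# Values arrive one at a time, as rows might while streaming from a database.
for value in [2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0]:
    stats.update(value)

print(stats.count, stats.mean, stats.variance)
```

Because each update touches only three numbers, memory use stays constant no matter how many rows stream through.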

We’d love to hear from you! Follow @WagonHQ, check out our stack on Stackshare, and learn more about our engineering and design team. Stay tuned for future engineering deep dives!

Thanks to Steve Pike and Doug Petkanics for reviewing this post. Image credit Adam Wilson from The Noun Project.