My First Two Weeks of Haskell at Wagon

June 11, 2015 | Joe Nelson

It’s no secret I’m into Haskell. Two years ago a friend introduced me to what I then affectionately called “moon language,” and it’s been a continuous experience of learning, cursing, and joy ever since.

Loving the language is easy; finding a team that shares this functional programming passion and uses it to build its core product is rarer. That’s what I found at Wagon, and I’d like to share my experience of the exciting first two weeks as a new Wagoner.

I’ve written Haskell professionally before, so that part wasn’t a change. What’s different is that my prior use of the language felt almost surreptitious: building an API server here, writing a script there, whatever could fit comfortably within a more conventional consulting project. Now everyone is on board, and we own our product destiny and technology choices.

For me it means being exposed to practices across the whole tech stack that were designed with Haskell in mind, not as an afterthought: from dockerizing Cabal, to sharing and versioning packages between client and server code, to streaming statistical calculations over datasets with Conduit. A lot goes into the company goal of creating a modern data collaboration tool.

Although we work together in person (in the Mission district of San Francisco where the walls are muralier, the coffee pour-overier and the bikes fixier) we do much of our code communication via pull requests. Everyone tries to have at least one other person review their work, which keeps us all in the know and leads to a lot of teaching. For instance, here is my teammate Mark suggesting an improvement to my coding style:

    (bid, now) <- liftIO $ do
      b <- randomIO
      n <- getCurrentTime
      return (b, n)

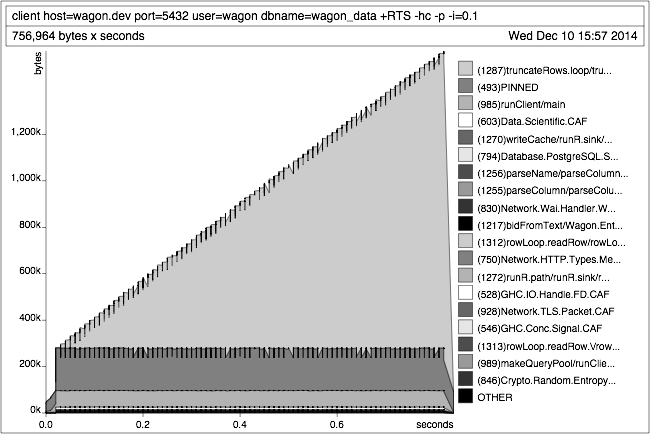
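The screenshot's content isn't available as text here, so the following is only a guess at the kind of tightening a reviewer might propose for a block like the one above, not necessarily what Mark wrote. It is a minimal sketch in applicative style. The name `freshBid` and the choice of `Int` for the random value are assumptions made for illustration, and it needs the `random` and `time` packages.

```haskell
import Control.Monad.IO.Class (MonadIO, liftIO)
import Data.Time.Clock (UTCTime, getCurrentTime)
import System.Random (randomIO)

-- Hypothetical helper: pair a random id with the current time.
-- The Int type for the id is an assumption; the original does not say.
freshBid :: MonadIO m => m (Int, UTCTime)
freshBid = liftIO $ (,) <$> randomIO <*> getCurrentTime

main :: IO ()
main = do
  (bid, now) <- freshBid
  putStrLn ("bid " ++ show bid ++ " created at " ++ show now)
```

Both actions still run in order inside a single `liftIO`; the applicative form just drops the intermediate names `b` and `n`.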

Challenges go beyond mere coding style, of course, and the team excels at debugging gnarly issues, including a memory leak caused by a useful yet tricky language feature called lazy evaluation. Our architecture is split between a shared server (Haskell), a client interface (Electron + React), and a client server (Haskell) for local data access. Nowadays it’s common for the browser component on the client to use hundreds of megabytes of memory while the Haskell component races along at twenty megabytes, even when doing intensive streaming operations. This was not always the case, however: the team used the GHC profiler to identify and correct a memory leak in the streaming subsystem.
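To give a feel for how lazy evaluation can leak memory in streaming code, here is a textbook-style sketch. It is not the actual Wagon bug, whose details aren't described here, and the names `Running`, `streamingMean`, and `leakyMean` are invented for the example. The strict version forces its accumulator at every step; the lazy version can pile up unevaluated additions until the end of the input, particularly when compiled without optimization.

```haskell
import Data.List (foldl')

-- Strict fields force the count and the sum at every step, so no chain of
-- unevaluated thunks accumulates while the input streams past.
data Running = Running !Int !Double

-- Mean of a list in a single pass with a constant-size accumulator.
streamingMean :: [Double] -> Double
streamingMean xs =
  case foldl' step (Running 0 0) xs of
    Running 0 _     -> 0
    Running n total -> total / fromIntegral n
  where
    step (Running n total) x = Running (n + 1) (total + x)

-- Leaky variant for comparison: lazy foldl over a lazy tuple can defer
-- every addition, keeping the pending computation alive in memory.
leakyMean :: [Double] -> Double
leakyMean xs =
  let (n, total) = foldl (\(c, s) x -> (c + 1, s + x)) (0 :: Int, 0) xs
  in if n == 0 then 0 else total / fromIntegral n

main :: IO ()
main = print (streamingMean [1 .. 1000000])  -- prints 500000.5
```

Running each variant with GHC's heap profiling enabled (`+RTS -hc` on a binary built with profiling) is one way to see the difference in memory use between the two.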

During the past two weeks I’ve been getting acquainted with more type-safe ways of doing things than I had previously tried. For instance, I’ve previously worked with Hasql for database access (a good choice for low-level and performant PostgreSQL work), and now I’m learning Persistent, which uses Template Haskell to generate structured ways to access and migrate databases. The Wagon server also includes a custom routing system with some handy combinators, which has helped me think about patterns for improving code with types.
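Wagon's routing system isn't shown here, so the following is a purely hypothetical sketch of what "routing with combinators" can look like in general. Every name in it (`Route`, `lit`, `capture`, `dispatch`, `Handler`) is invented for illustration. The idea is that a route is a small typed parser over path segments, and routes compose with the ordinary `Functor` and `Applicative` operators.

```haskell
import Data.Maybe (listToMaybe, mapMaybe)
import Text.Read (readMaybe)

-- A route consumes path segments and, on success, yields a value
-- together with the segments it did not consume.
newtype Route a = Route { runRoute :: [String] -> Maybe (a, [String]) }

instance Functor Route where
  fmap f (Route r) = Route $ \segs ->
    case r segs of
      Nothing        -> Nothing
      Just (a, rest) -> Just (f a, rest)

instance Applicative Route where
  pure a = Route $ \segs -> Just (a, segs)
  Route rf <*> Route ra = Route $ \segs ->
    case rf segs of
      Nothing        -> Nothing
      Just (f, rest) ->
        case ra rest of
          Nothing         -> Nothing
          Just (a, rest') -> Just (f a, rest')

-- Match one fixed path segment.
lit :: String -> Route ()
lit s = Route $ \segs ->
  case segs of
    (x : rest) | x == s -> Just ((), rest)
    _                   -> Nothing

-- Capture one segment, parsed at whatever type the handler expects.
capture :: Read a => Route a
capture = Route $ \segs ->
  case segs of
    (x : rest) -> fmap (\a -> (a, rest)) (readMaybe x)
    []         -> Nothing

-- A route matches only if it consumes the whole path.
match :: Route a -> [String] -> Maybe a
match r segs =
  case runRoute r segs of
    Just (a, []) -> Just a
    _            -> Nothing

data Handler = ListQueries | ShowQuery Int
  deriving (Show, Eq)

routes :: [Route Handler]
routes =
  [ ListQueries <$ lit "queries"
  , ShowQuery <$> (lit "queries" *> capture)
  ]

-- Return the first route that matches the full path.
dispatch :: [String] -> Maybe Handler
dispatch segs = listToMaybe (mapMaybe (\r -> match r segs) routes)

main :: IO ()
main = do
  print (dispatch ["queries"])         -- Just ListQueries
  print (dispatch ["queries", "42"])   -- Just (ShowQuery 42)
  print (dispatch ["queries", "abc"])  -- Nothing
```

The payoff is that `ShowQuery` can only ever receive an `Int`: a path like `/queries/abc` is rejected by the router itself rather than by ad hoc parsing inside the handler.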

I’m excited to share more of what I learn in the coming weeks, both the coding and the cultural practices that help us do our best work, as well as the things we’re learning to improve. (See also the Engineering at Wagon post.)

We’ll be at BayHac this weekend so make sure to check out our CTO Mike Craig’s talk Sunday, June 14 at 11am or find me. If you love this stuff the way we do, you should come spend a day with our team.